Part 3: OMG! Not another digital transformation article! Is it about effecting risk management and change management?

Abstract

Humans have used technology to transform their societies from prehistoric times up to the present. Society begrudgingly accepted the transformative changes, yet the changes moved society forward. Now information technologies and the information revolution are again transforming society. The COVID-19 pandemic further accelerated the transformation from many years to just a couple of years. This 3-part series discusses digital transformation (DT) from several perspectives. Part 1 discussed the key DT business drivers, concepts, and technology trends, how they are transforming organizations and society, and how they are affecting business users and customers. Some technology trends such as real-time data analytics are ongoing, while others are more recent, such as blockchain.

Part 2 discussed the critical factors for a successful DT journey, because organizations need to make a “mind-shift” from traditional records and information management (RIM) practices to content services (CS). This means imagining the “art of the possible” for a new future using a cloud computing model to deliver transformative change. This includes defining the product scope of the DT journey and the digital products and services that will deliver transformative change for a new future.

Here, Part 3 discusses how to manage the various DT risks. One essential step is developing the DT business case and connecting it with the critical success factors (CSFs) and the product scope. This discussion includes methods, tools, and techniques such as using personas and identifying use cases that have high business value, while minimizing project risks. This part also discusses managing CS risks such as ransomware, privacy, change management, and user adoption. Finally, Part 3 looks to the future, presents next steps, and discusses key takeaways.

Introduction

The previous article – Part 2 of this 3-part series – discussed DT by imagining how the “art of the possible” can help define the end state of the DT journey. However, imagination is not enough to realize the end state. Understanding the CSFs is necessary in order to define the scope of the DT initiative before starting the journey.

DT means changing the current processes, policies, and technologies, which naturally leads to uncertainty about the future state. The uncertainty can result in a perception of risk – empirical or conjectural. Many narratives that deal with change, strategy, transformation, and implementation involve discussing risk. “In today’s environment, organizations and companies face a plethora of uncertainties. The situation exposes the organizations and companies to increased risk. Therefore, the organizations and companies must take precautions to manage the uncertainties and their consequential risks … of media scrutiny, public relations, financial loss, criminal and / or civil litigation, bankruptcy, etc.” (Srivastav 2014, p 18).

Risk Management and DT Experience

Uncertainty and risk are not the same. Frank Knight (1921) wrote, “Uncertainty must be taken in a sense … distinct from the familiar notion of Risk [sic] … The essential fact is that ‘risk’ means in some cases a quantity susceptible of measurement” (p 19-20). Because risk can be measured, common quantitative tools and techniques include risk matrices, risk registers, risk logs, risk breakdown structures, risk categories, Monte Carlo simulations, and sensitivity analyses. While this quantitative approach is important, risk assessment also has a qualitative element based on experience and sometimes even “gut feel.” So, risk management is both quantitative and qualitative.
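
As a minimal sketch of the quantitative side, the snippet below scores a small risk register by expected exposure (probability multiplied by impact) and then runs a simple Monte Carlo simulation of the total loss. The risk names, probabilities, and dollar impacts are hypothetical values chosen for illustration; they do not come from any of the cited sources.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    probability: float  # chance the risk occurs during the initiative (0 to 1)
    impact: float       # estimated cost if it occurs (hypothetical dollars)

    @property
    def exposure(self) -> float:
        """Expected loss: probability multiplied by impact."""
        return self.probability * self.impact


# Hypothetical risk register; every value here is an illustrative assumption.
REGISTER = [
    Risk("Ransomware attack", 0.10, 500_000),
    Risk("Low user adoption", 0.40, 150_000),
    Risk("Data migration loss", 0.05, 300_000),
]


def rank_by_exposure(risks):
    """Return the risks sorted from highest to lowest expected exposure."""
    return sorted(risks, key=lambda r: r.exposure, reverse=True)


def simulate_total_loss(risks, trials=10_000, seed=42):
    """Monte Carlo estimate of the mean total loss across all risks."""
    rng = random.Random(seed)  # seeded so the sketch is repeatable
    total = 0.0
    for _ in range(trials):
        # In each trial, a risk occurs if a random draw falls below its probability.
        total += sum(r.impact for r in risks if rng.random() < r.probability)
    return total / trials


if __name__ == "__main__":
    for risk in rank_by_exposure(REGISTER):
        print(f"{risk.name}: expected exposure ${risk.exposure:,.0f}")
    print(f"Simulated mean total loss: ${simulate_total_loss(REGISTER):,.0f}")
```

The ranking is the kind of output a risk register or risk matrix produces; the simulation treats the risks as independent, which is a simplification that the qualitative judgment described above would need to challenge.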

That is why organizations hire consultants who have the knowledge to consider a range of scenarios and the risk response to each, depending on the scope of the DT initiative. Take the example of the pandemic: only some governments engaged pandemic experts to develop action plans. In fact, “the Independent Panel for Pandemic Preparedness and Response, pointedly noted that since the H1N1 pandemic in 2009, there have been 11 high-level commissions and panels that produced more than 16 reports, with the vast majority of recommendations never implemented” (Fink 2021). Now, armed with lessons learned, the World Health Organization (WHO) has released two reports to prepare for the next pandemic.

Consider another example: the 1972 Summer Olympics in Munich, when terrorists took Israeli athletes hostage. “Commissioned by organizers to predict worst-case scenarios for the Munich games, [Georg] Sieber came up with a range of possibilities, from explosions to plane crashes, for which security teams should be prepared. Situation Number 21 was eerily prescient … Sieber envisioned that “a dozen armed Palestinians would scale the perimeter fence of the [Olympic] Village. They would invade the building that housed the Israeli delegation, kill a hostage or two … then demand the release of prisoners held in Israeli jails and a plane to fly to some Arab capital.” The West German organizers balked, asking Sieber to downsize his projections from cataclysmic to merely disorderly — from worst-case to simply bad-case scenarios.” (Latson 2014) Unfortunately, the events unfolded almost exactly like the scenario in Situation Number 21. The organizers ignored this scenario during the planning stage because the uncertainty seemed remote. Therefore, the risk did not seem to matter.

The point is that organizations must engage subject matter experts in an effort to understand uncertainties and identify the risks that matter.

Developing the DT Business Case

The success of a DT initiative relies heavily on presenting a good business case or multiple business cases throughout the journey – as the organization and technologies evolve. The initiative can be narrow in scope – for example, to address a line of business or a technology solution – or very broad, as in using DT to improve the capability and maturity of the organization’s compliance processes.

The business case must provide, for example, the rationale for the initiative, the problem definition, the current state, and the vision of the future state. It must also define the initiative’s benefits, costs, risks, and impacts. Many organizations have business case templates; for those that do not, many templates are available on the internet.

- Linking Scope to the Business Use Cases

The business case must link the success factors and product scope to the initiative’s outcomes and benefits. One approach is to identify personas of business users to determine their needs. Creating personas can be time consuming and complex, but they can be the foundation for good requirements and user experience. Common personas are executives, directors, senior managers, financial analysts, and more.

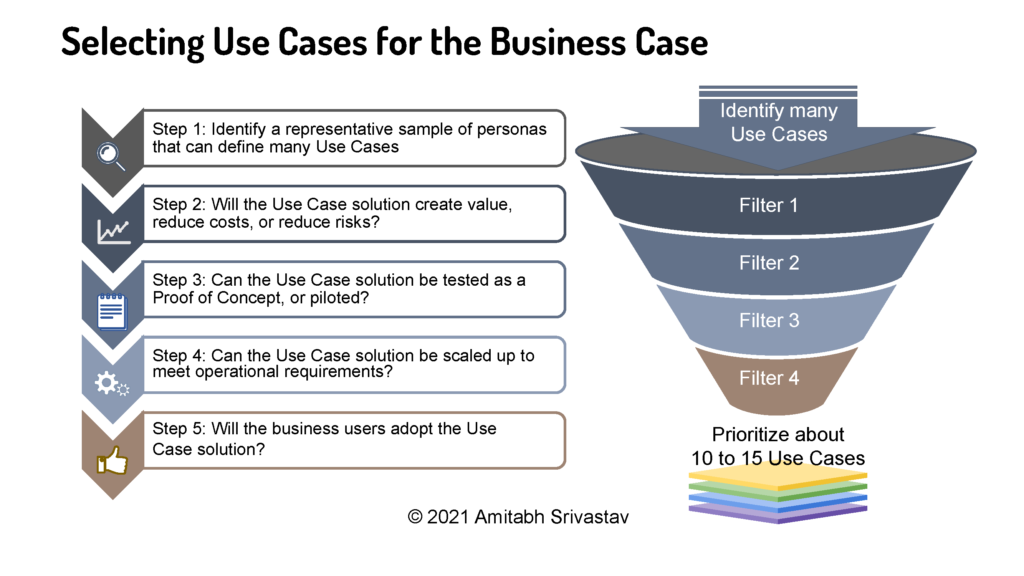

Figure 1 illustrates a five-step process for selecting and prioritizing the use cases. The process helps to filter and prioritize which use cases to include in the business case. Note that the size of the organization, the focus of the initiative, the schedule, and the budget will influence the final number of use cases that are included.

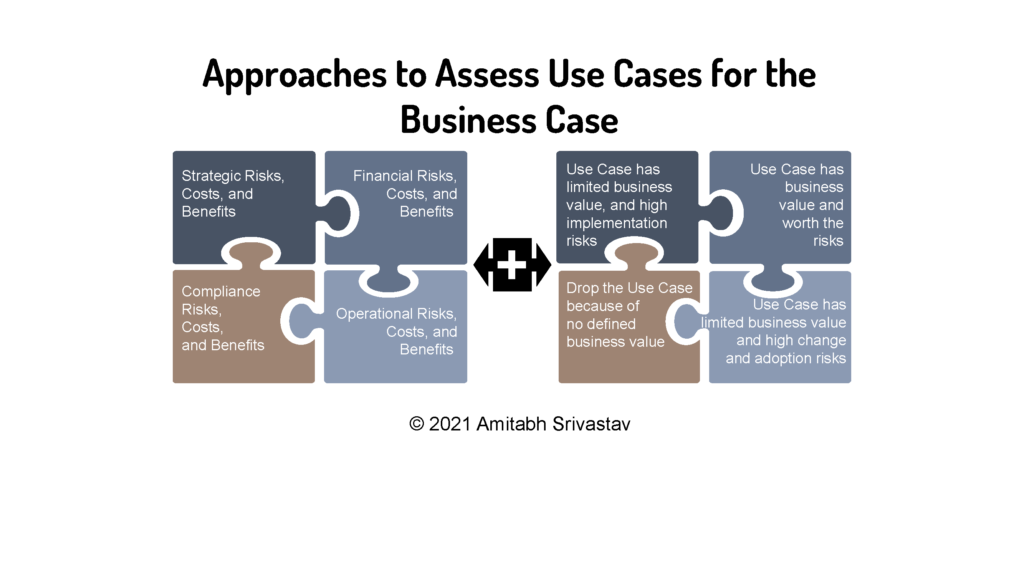

Prioritizing the use cases involves assessing the costs and benefits of each one. For example, the business value of a use case may seem strong, but in fact the use case might have limited value or high implementation or adoption risks. Thus, it is necessary to spend the time and effort to find the best fit among the use cases, as illustrated in Figure 2. Together, the two approaches can help guide the assessment of each use case’s risk and business value. When assessing the risks, consider them as inter-related, not discrete. Similarly, when assessing the business value of the use cases, consider them as inter-related.
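
One way to picture the best-fit analysis is a simple value-versus-risk sort. The sketch below is illustrative only: the 1-to-5 scoring scale, the threshold, the quadrant labels, and the sample use cases are all assumptions, not the method behind Figure 2.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    business_value: int  # 1 (low) to 5 (high), hypothetical scale
    risk: int            # 1 (low) to 5 (high), hypothetical scale


def classify(use_case, threshold=3):
    """Place a use case in a value/risk quadrant (labels are assumptions)."""
    high_value = use_case.business_value >= threshold
    high_risk = use_case.risk >= threshold
    if high_value and not high_risk:
        return "prioritize"
    if high_value and high_risk:
        return "mitigate first"
    if not high_value and not high_risk:
        return "defer"
    return "drop"


def prioritize(use_cases):
    """Order use cases by business value minus risk, highest first."""
    return sorted(use_cases, key=lambda u: u.business_value - u.risk, reverse=True)


# Hypothetical candidates for the business case.
CANDIDATES = [
    UseCase("Auto-classify invoices", business_value=5, risk=2),
    UseCase("AI trend reports on shipping data", business_value=4, risk=4),
    UseCase("Archive digitization", business_value=2, risk=2),
    UseCase("Legacy report rewrite", business_value=1, risk=4),
]

for candidate in prioritize(CANDIDATES):
    print(f"{candidate.name}: {classify(candidate)}")
```

A single score per use case treats each one as independent. Because the risks and the business value are inter-related, a score like this is best used to start the discussion with stakeholders, not to settle it.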

The intent of this analysis is to link the scope and the CSFs with the use cases into a succinct narrative describing the new DT capabilities to deliver products and services.

- DT Capabilities

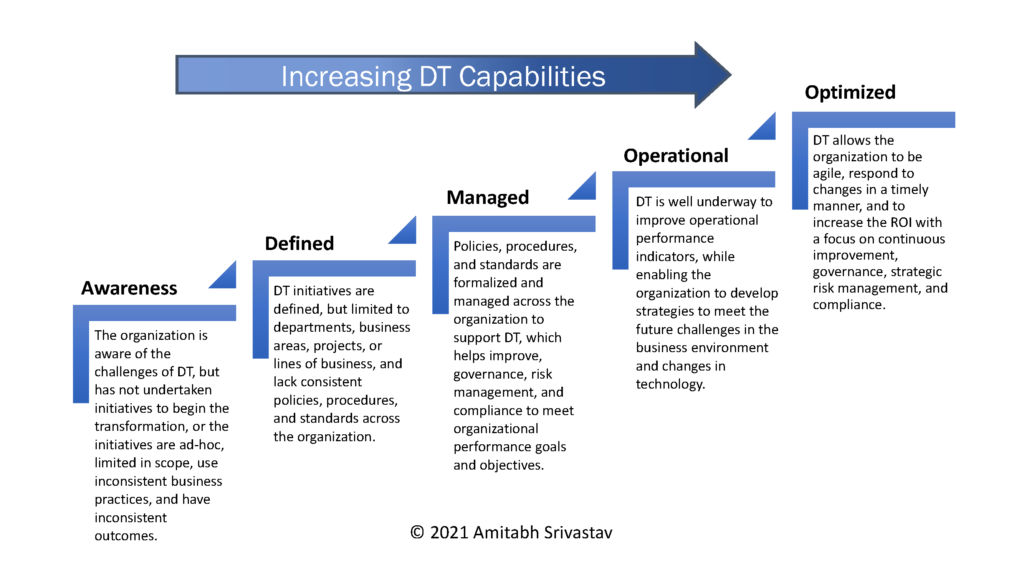

When management starts the DT journey, the organization has limited capabilities to deliver products and services using DT tools. So, the organization has to reimagine its future by defining the initiative and the journey. As the journey progresses, the organization will evolve and improve its DT capabilities, define policies and procedures, and operationalize and measure performance. The end state is to use DT to optimize the use of resources, increase ROI, and improve governance, strategic risk management, and compliance, as illustrated by the five levels in Figure 3.

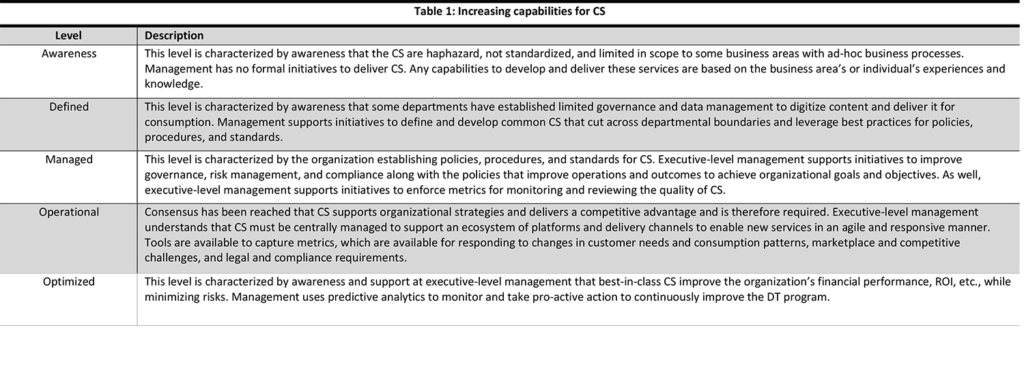

The levels help the organization improve its people, processes, policies, and technologies with respect to each CSF. As an example of a CSF, Table 1 defines the capabilities for CS to progress from Level 1 to Level 5.

Similar capabilities will describe the other CSFs discussed in Part 2. The business case needs to explore the risks and balance them with the benefits.

Risk Management for CS

The term enterprise content management (ECM) has taken years to enter into management’s vernacular, yet there is confusion about the term’s definition, how ECM applies to the organization, and what pain points it will resolve. Depending on one’s role, the ECM solution might mean a content management system (CMS), web content management (WCM), knowledge management system (KMS), document management system (DMS), or electronic documents and records management system (EDRMS). Some even confuse ECM with information lifecycle management (ILM), content lifecycle management (CLM), digital asset management (DAM), digital rights management (DRM), and enterprise file sync and share (EFSS) solutions. Yet others see ECM as an umbrella term consisting of the above technologies and solutions, and new ones as discussed in Part 1. This confusion can increase the risk of failure of the DT initiative.

- Customer Experience Risks

In 2016 the term “content services” entered management’s vernacular when Gartner redefined ECM to focus on CS applications, platforms, and components (Shegda, et al. 2016). This shifted the focus from how content is managed in a single repository to how content is used by internal and external business users. This includes creating, collaborating, transforming, and leveraging content to gain insight – how users save, search, and share content. This shift further confused the narrative for management and increased the risk of DT not delivering the reimagined future. Thus, management must be crystal clear when it comes to managing the CS risks. “It’s strategic, rather than technology oriented and provides an evolved way of thinking about how to solve content related problems. It blends the reality of what is happening now in the digital enterprise and the emerging technology of the near future” (Woodbridge 2017). Nevertheless, the term ECM is still recognized and widely used, co-existing with CS.

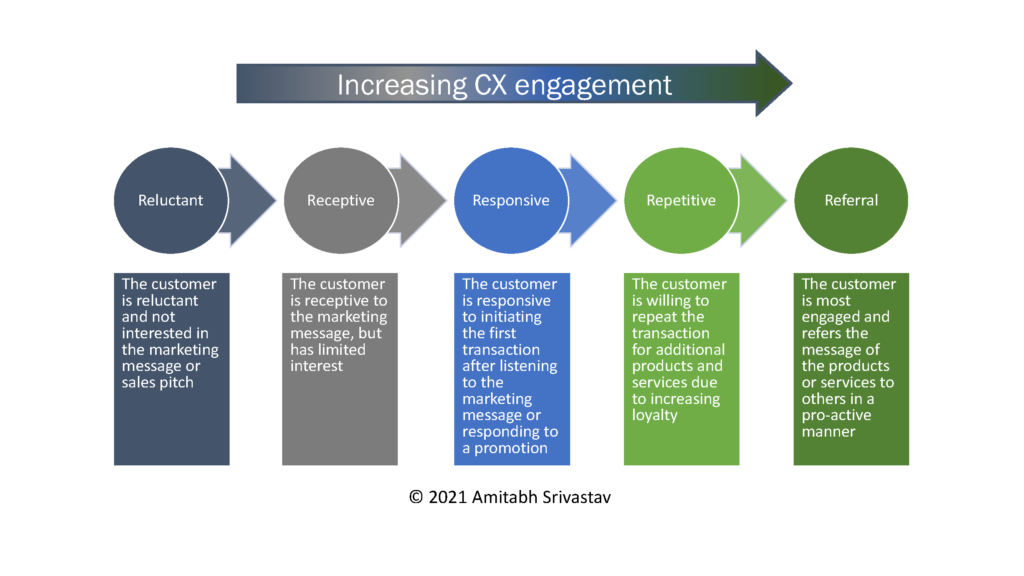

Part 2 discussed customer experience (CX) as a CSF for DT. Thus, managing CX risks is essential to delivering products and services anytime, anywhere, to any device. This means engaging customers and turning them into repeat customers. There are many customer acquisition, engagement, and retention models, as well as studies describing the costs of finding new customers versus retaining them. One such model is illustrated in Figure 4. The five steps convert a reluctant customer into a loyal customer who refers others to a product or service.

Therefore, CX must be more comprehensive than merely the value of the sales team hitting its quota, up-selling, or cross-selling. CX is about consistently delivering a high-quality experience at every touchpoint and across every channel.

- Cloud-first “Mind-shift”

A cloud-first “mind-shift” implies using a cloud computing model, which exposes an organization to risks on the Internet, where data is considered “digital gold” that cyber criminals want to steal. Therefore, organizations must protect themselves from cyber risks and attacks such as:

- Denial-of-service (DoS) and distributed denial-of-service (DDoS)

- Phishing and spear phishing

- Man-in-the-middle (MitM)

- SQL injection

- Cross-site scripting (XSS)

- Ransomware

A sinister variation of the SaaS model is Ransomware as a Service (RaaS), in which criminals on the dark web rent the service in order to launch attacks on targets. “RaaS is a new business model for ransomware developers. Like software as a service (SaaS), the ransomware developers sell or lease their ransomware variants to affiliates who then use them to perform an attack” (Midler 2020). In May 2021, Colonial Pipeline paid $4.4 million to DarkSide, an organization that provides RaaS to partners to carry out actual attacks. In this case, U.S. law enforcement agencies recovered most of the bitcoin paid in ransom.

Cyber criminals have even outsourced their development to ransomware developers, who in turn have refined their processes. “Ransomware tends to be well organized, providing each victim with unique identifiers, which are then used to deliver the correct decryption keys … The software is also designed to be easy to use, even by a non-technical person, and is often available in multiple languages” (Midler et al., 2020, p 6).

Moving to a cloud computing model may involve migrating data to the cloud; what and when to migrate will depend on factors that, in turn, will depend on the scope of the DT initiative and the CSFs. The Proof of Concept (PoC) can help inform the approach to developing the data migration strategy. For example, when considering a mission-critical system, one of the PoCs should deal only with data migration. The PoC can help determine whether a day-forward, backfile, or on-demand migration is the best strategy for mitigating data loss and downtime risks during migration. This use case could apply to financial applications and data. On the other hand, using a cloud service for a new business solution that is not mission-critical may not require any data migration. The PoC may focus on user adoption of new tools and processes, reports, interoperability with existing systems, threat risk assessment, security, and privacy, depending on the data that will eventually be stored in the cloud. This use case could apply to a new customer data application using AI that will access current shipping data to generate reports for trend analysis and predictive analytics.

One aspect of CS that is not given much consideration is archival services and long-term retention. These can play a significant role if part of the DT initiative is to digitize large volumes of archival records and make them available online.

Archival services imply long-term storage of content such as pension records, life insurance policies, real-estate transactions, magazines, and much more. This content has a retention period considered permanent. However, the technology to store digital content is changing continuously, which leads to technology obsolescence. Therefore, long-term access to digital content is a technology and operational risk. Another archival risk is degradation of digital storage media. This can happen when a bit “flips” from a “0” to a “1” or vice versa, resulting in data corruption.
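
A common way to detect this kind of corruption is a fixity check: record a checksum when the content is archived and compare it later. The following is a minimal sketch using Python’s standard `hashlib` module; the sample record and the helper names are hypothetical, and a real digital preservation program would involve much more than a checksum.

```python
import hashlib


def sha256_digest(data: bytes) -> str:
    """Return the SHA-256 hex digest used as the fixity value."""
    return hashlib.sha256(data).hexdigest()


def flip_bit(data: bytes, bit_index: int) -> bytes:
    """Return a copy of data with one bit inverted, simulating media decay."""
    corrupted = bytearray(data)
    corrupted[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(corrupted)


def is_intact(data: bytes, recorded_digest: str) -> bool:
    """Compare the current digest with the one recorded at ingest."""
    return sha256_digest(data) == recorded_digest


original = b"Pension record 1987-0042"  # hypothetical archival content
recorded = sha256_digest(original)       # stored when the record was archived
damaged = flip_bit(original, 5)          # a single flipped bit

print(is_intact(original, recorded))  # True
print(is_intact(damaged, recorded))   # False
```

The check only detects corruption; recovering the content still depends on having a second, intact copy.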

The risk analysis should address long-term storage for archival services and identify the next steps to develop a strategy for digital preservation because “electronic archiving considerations transcend the life of systems and are system independent; they need to be assessed in a specific longer-term strategic framework … for permanent or long-term retention” (ICA 2008, p 6).

- Jurisdiction and Privacy Data Risks

Note: the following discussion regarding jurisdictional and privacy risks should NOT be considered as legal advice. Readers should seek legal advice for their questions.

Organizations must be clear when negotiating with cloud providers where the data can be stored and not stored when using IaaS or PaaS – and in some cases where the data is processed when using SaaS.

This raises the consideration of data-at-rest – that is, when the data is not being processed. Consider the scenario of Cloud Provider X that is domiciled in Country A but owns a data center in Country B. The authorities in Country A could legally force the provider to release data to authorities there, regardless of Country B’s sovereignty of the data. However, if the provider only rents the facilities from Company Y, which is domiciled in Country B, then does Company Y or Provider X own the data? The sovereignty of Country B could override Country A’s legal requirement for the data. Of course, that is for the lawyers and the legal system to resolve.

Now consider the same scenario as above, but now the data is processed by Company Z using its SaaS that is hosted in a data center in Country C. Company Z might store the data in Country C for faster processing, reducing latency, logging transactions, and so on. So, which country has sovereignty of the data and at which point in time? It is clear that jurisdictional risks related to data residency and data sovereignty are complicated legal affairs that may result in lengthy and costly litigation (TBS 2016, Frank 2016).

Tangential to data residency and data sovereignty is retrieving data from cloud repositories and SaaS applications in a different jurisdiction if the organization is involved in litigation with a cloud provider or SaaS provider or in a dispute with local authorities. The data might not be available for a long period, which would be an operational risk. In fact, this same scenario may also occur with a cloud or service provider operating in the same jurisdiction as the organization. Again, the data might not be available for some time and thereby the organization’s operations are at risk.

Mobile devices allow users to access content from anywhere, which presents security and privacy risks. Mobile device management (MDM[1]) is critical because devices may contain personal and business content and might be collecting business and personal information. The line separating personal devices used for business and business devices used for personal reasons has become blurred. Users are vulnerable to social engineering attacks via their mobile devices. “Therefore, companies are increasingly concerned about potential cyber-attacks … and mobile applications are a component of that cyber threat landscape … [MDM] is an enterprise deployment and management scheme for mobile devices … generally comprised of policies and an application … designed to enforce security updates, reduce the risk of malware, and mitigate the risk of exposing non-public data, including personally identifiable information … and intellectual property” (Hayes, et al. 2020, p 1). The risk increases when crossing international borders, where local authorities could confiscate mobile devices and force access to their contents.

An interesting area of jurisdictional risk that might become a material risk is related to intellectual property (IP), copyright laws, and ownership as they might apply to non-fungible tokens (NFTs) stored on blockchains in various jurisdictions. NFTs have been in the spotlight due to high-profile transactions by celebrities, sports stars, auction houses, and collectors. Time will tell whether the provenance of the IP, collectibles, or other assets holds up when the NFTs are litigated.

Privacy continues to be a material risk for all organizations because legislation in different jurisdictions can add complexity to compliance efforts. For example, a supply chain app will store customer information of suppliers spread across jurisdictions, or a business app used by an organization’s subsidiaries in many countries will store customer information that might be available to all subsidiaries, or a social media app downloaded by users around the world will store PII. In each case, the app is storing information about customers and users in different jurisdictions with different privacy legislation. “As the owner of this information, the organization’s responsibility extends beyond its own walls to include protecting any information that a third party (e.g., payroll company, telemarketing firm, cloud storage provider) collects, manages, or stores on its behalf” (ARMA 2018, p 154).

Using Privacy by Design (PbD) principles (Cavoukian 2011) is just one approach to mitigating privacy risks. The PbD framework has been an international standard since 2010. Among the privacy and data protection legislation that incorporates PbD principles are the California Consumer Privacy Act (CCPA), Europe’s General Data Protection Regulation (GDPR), and Canada’s Personal Information Protection and Electronic Documents Act (PIPEDA).

The American Institute of Certified Public Accountants (AICPA) and the Canadian Institute of Chartered Accountants (CICA) developed the Privacy Maturity Model (PMM) based on the Generally Accepted Privacy Principles (GAPP). The PMM and GAPP are useful tools “to help management create an effective privacy program that addresses privacy risks and obligation as well as business opportunities” (AICPA/CICA 2011, p 1). With this in mind, organizations should avoid the promise of a privacy-based point solution (i.e., a specific tool or product) that resolves a singular risk, and instead focus on enterprise-wide solutions. This means performing a privacy impact assessment (PIA) to ascertain what information is necessary to collect, how long to store it, and how to dispose of it.
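
The three PIA questions (what to collect, how long to store it, and how to dispose of it) can be captured in a simple data inventory. The sketch below is a hypothetical illustration: the field names, retention periods, and disposal methods are assumptions, not requirements drawn from GAPP, the PMM, or any legislation.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class DataItem:
    name: str
    purpose: str         # why the information is necessary to collect
    collected_on: date
    retention_days: int  # how long to store it (hypothetical policy)
    disposal: str        # how to dispose of it


def due_for_disposal(items, today):
    """Return the items whose retention period has ended as of today."""
    return [
        item for item in items
        if item.collected_on + timedelta(days=item.retention_days) <= today
    ]


# Hypothetical inventory entries.
INVENTORY = [
    DataItem("Customer email", "order confirmation", date(2020, 1, 15), 365, "secure delete"),
    DataItem("Payroll record", "legal obligation", date(2021, 6, 1), 2555, "shred and purge"),
]

for item in due_for_disposal(INVENTORY, today=date(2022, 1, 1)):
    print(f"{item.name}: dispose via {item.disposal}")
```

Keeping the purpose alongside each item makes it easier to question whether the information needs to be collected at all.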

Finally, by using PbD, GAPP, and PMM, the organization can practice good digital ethics, which can translate into a positive image and a good reputation, and can help improve organizational performance. Organizations should look at “people, processes, policies, and technologies” in this light, and focus on:

- Improving training and awareness of privacy issues and risk

- Developing appropriate processes for collecting private information

- Updating policies to emphasize good practices for handling private information and aligning with privacy laws

- Using technologies that support the policies and incorporate PbD

- Big Data Considerations

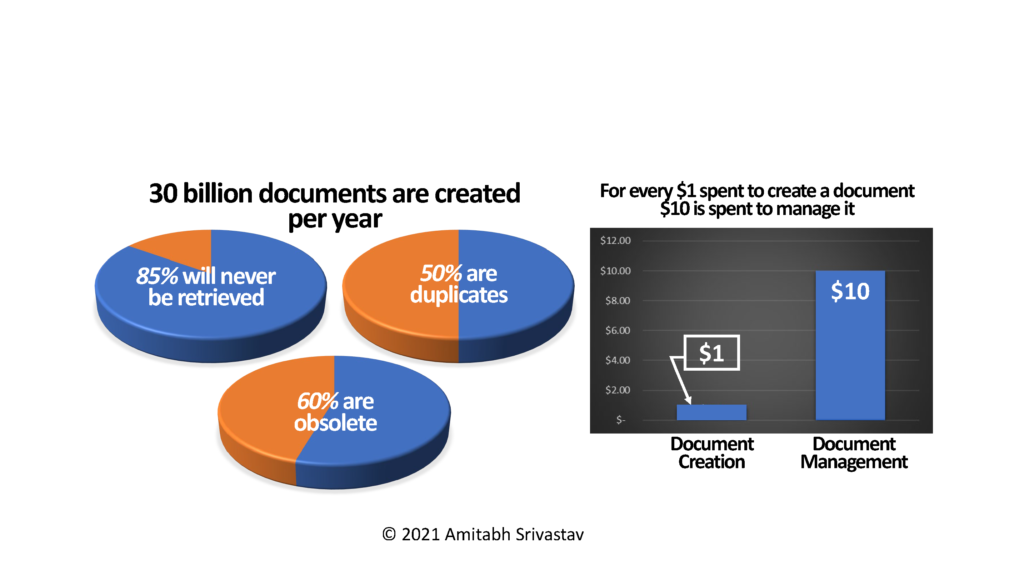

For many years, organizations were not too concerned about storing information indefinitely because the cost of storage kept dropping, but that thinking has changed because the risks now outweigh the benefits. Research shows that information is rarely accessed after a few years, and eventually it becomes stale. The period during which data remains useful is referred to as its “shelf life.” For example, customer purchasing data or public health surveillance data may have decreasing value over time. As shown in Figure 5, a study by McKinsey Global Institute in 2011 estimated that the cost to create data is $1, but the cost to manage it is $10, even as the data becomes stale. Due to the information explosion, surely these costs have increased even more!

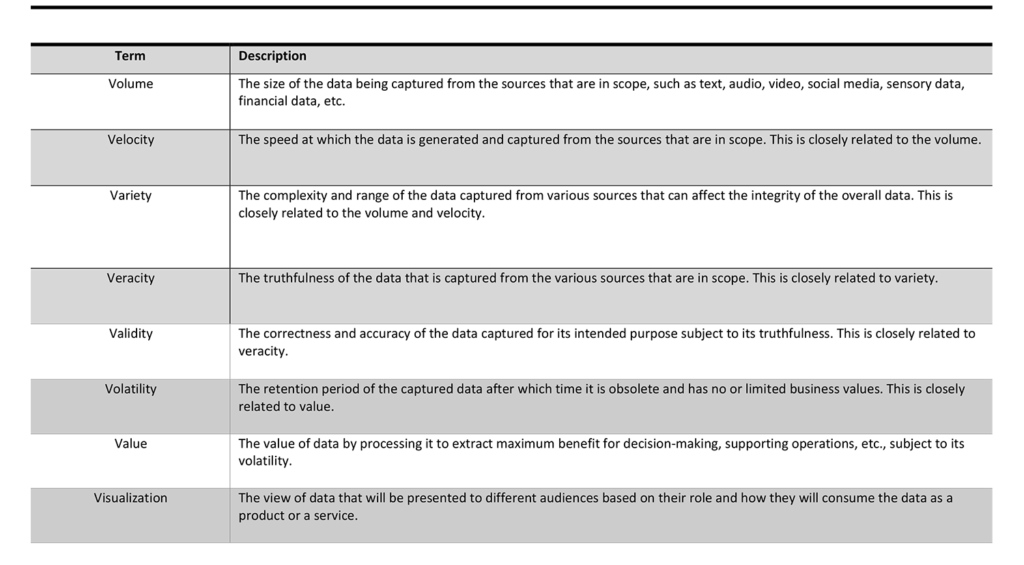

Organizations are using AI to gain insights into their huge amounts of data, which is costly. Creating insights from data is important, but organizations must ask what data to collect, why collect it, how often to collect it, and so on. One approach to asking and answering these questions is the 8 V’s of big data. The often-mentioned V’s are the volume, velocity, and variety of the data. Khan et al. (2014) include these three, plus veracity, validity, volatility, and value. One additional V they do not mention is visualization. See Table 2 for a description of the 8 V’s.

Sometimes the data needs to be processed at or near the source instead of being transmitted to the cloud, processed there, and the results sent back to where the data was captured. The reason is that even a fraction of a second of latency could be a matter of life and death. This is referred to as “edge computing.” Gartner defines edge computing as “part of a distributed computing topology where information processing is located close to the edge, where things and people produce or consume that information” (Gartner 2021). Consider the huge amount of data generated and captured with an autonomous vehicle (AV). The data must be processed immediately by computers on the AV to react to hazards. Or consider the sensors and computers on a drone. The computers must process the data and react to changes during the flight. In these scenarios edge computing implies edge AI that must react instantaneously to avoid hazards and learn at the same time via machine learning. Therefore, edge computing solutions must consider the 8 V’s of big data when processing and reacting to the data.
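
A short worked calculation shows why latency matters for a moving vehicle. The sketch below converts a delay into the distance travelled before the vehicle can react; the speed of 100 km/h and the latencies of 10 ms and 100 ms are assumed figures for illustration, not measurements.

```python
def distance_during_latency_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance in metres a vehicle covers while waiting latency_ms."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * (latency_ms / 1000)


# Hypothetical comparison: on-board processing versus a cloud round trip.
for label, latency_ms in [("edge (on-board)", 10), ("cloud round trip", 100)]:
    metres = distance_during_latency_m(100, latency_ms)
    print(f"{label}: {metres:.2f} m travelled before a reaction")
```

Under these assumptions the vehicle travels about 0.28 m during the on-board delay and about 2.78 m during the cloud round trip, which can be the difference between avoiding a hazard and hitting it.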

- Business Continuity

The pandemic certainly tested many organizations’ business continuity (BC) plans. While BC planning is not specific to DT, DT does add new dimensions to BC plans when considering risks. Using a cloud-first computing model means that an organization is dependent on a cloud provider for uninterrupted service, especially during critical periods such as holiday shopping sales, playoff games, access to public services after a natural disaster, and more.

The Business Continuity Institute (BCI) defines BC planning as “documented procedures that guide organizations to respond, recover, resume and restore to a pre-defined level of operations following disruption” (BCI 2017, p 7). A well-developed and tested BC strategy and plan can help the organization manage risks during and after an incident – a cyberattack, natural disaster, fire, pandemic, and so on – as the organization responds, recovers, and resumes normal operations. The BC strategy and plan identifies, analyzes, and prioritizes:

- Potential threats and their impact on normal operations

- Risk management activities for potential threats and a framework to help ensure that key functions of the business continue to operate in the worst-case scenario and other key scenarios

- How and when all stakeholders will have access to timely and relevant information during BC activities

Not having a BC strategy and plan may affect the survivability of the organization because it will struggle to resume operations as it tries to:

- Restore mission-critical systems and access to data

- Protect physical and digital assets

- Maintain market, customer, and employee confidence

- Comply with legislation and regulations

- Secure confidential records and locate vital records

In a cloud-first model, the systems and functions that deliver the products and services depend on cloud service providers, and so does the work of identifying, analyzing, and prioritizing them. Therefore, BC planning is a collaborative effort between the organization’s key business stakeholders and the provider’s technical team. The organization’s Business Continuity Steering Committee would lead this effort, “including making strategic policy and continuity planning decisions for the organization, and for providing the resources to accomplish all business continuity program goals” (BCI 2017, p 8).

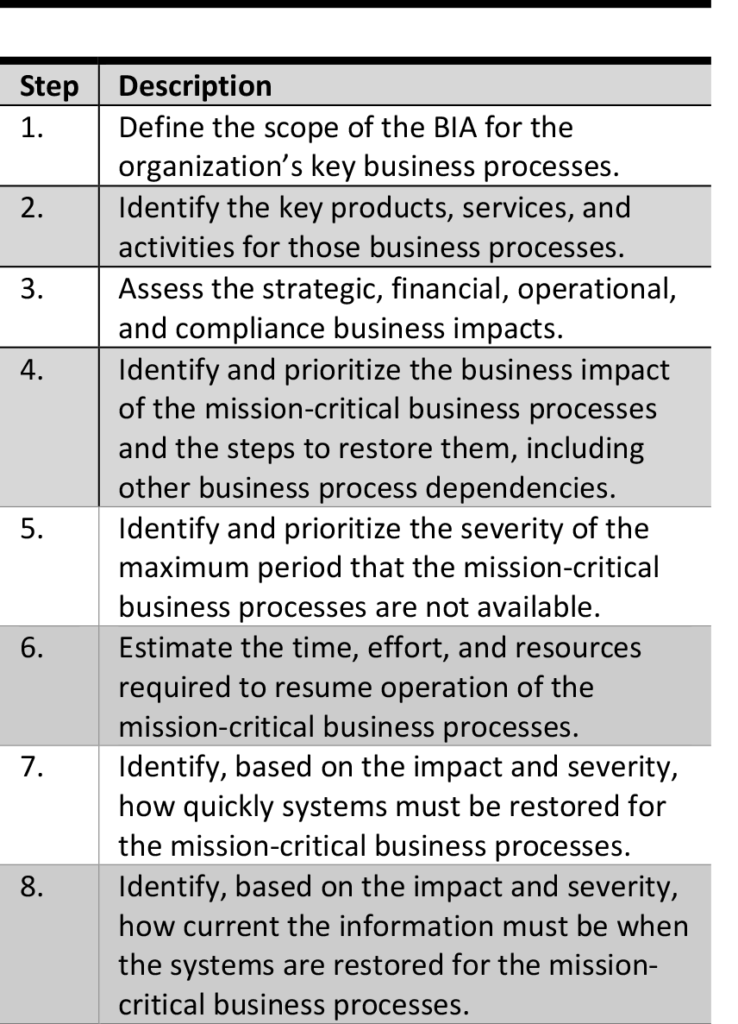

There are several inputs that go into developing a BC strategy and plan, but two key ones are business impact analysis (BIA)[2] and backup strategy. The BIA looks at key business processes and systems and should include scenario planning with key stakeholders who can identify possible incidents and responses in order to recover and resume normal operations. “Scenario analysis for risk response planning involves defining several plausible alternative scenarios. The different scenarios may require different risk responses that can be … evaluated for cost and effectiveness” (PMI 2009, p 100). It is essential to define the methodology and scope of the BIA regarding business processes. Then, the BIA will identify systems and scenarios and prioritize the responses. Table 3 describes one possible series of steps.
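
The idea of evaluating alternative responses for cost and effectiveness can be sketched as a small comparison. Everything in the example below is hypothetical: the scenarios, probabilities, response costs, and loss estimates are illustrative assumptions, and the net-benefit formula is one simple way to compare responses, not a method prescribed by PMI or BCI.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Response:
    name: str
    cost: float          # cost to implement the response (hypothetical dollars)
    loss_avoided: float  # loss avoided if the scenario occurs (hypothetical dollars)


def net_benefit(response, probability):
    """Loss avoided, weighted by scenario probability, minus the response cost."""
    return probability * response.loss_avoided - response.cost


def best_response(responses, probability):
    """Pick the response with the highest net benefit for a scenario."""
    return max(responses, key=lambda r: net_benefit(r, probability))


# Hypothetical scenarios: name -> (probability, candidate responses).
SCENARIOS = {
    "Cloud region outage": (0.2, [
        Response("Secondary region failover", 40_000, 400_000),
        Response("Manual workaround procedures", 5_000, 100_000),
    ]),
    "Ransomware incident": (0.1, [
        Response("Offline immutable backups", 20_000, 500_000),
        Response("Cyber insurance only", 15_000, 200_000),
    ]),
}

for scenario, (probability, responses) in SCENARIOS.items():
    choice = best_response(responses, probability)
    print(f"{scenario}: {choice.name}")
```

In practice the stakeholders in the scenario planning sessions would supply and debate these estimates; the calculation only makes the trade-off between cost and effectiveness explicit.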

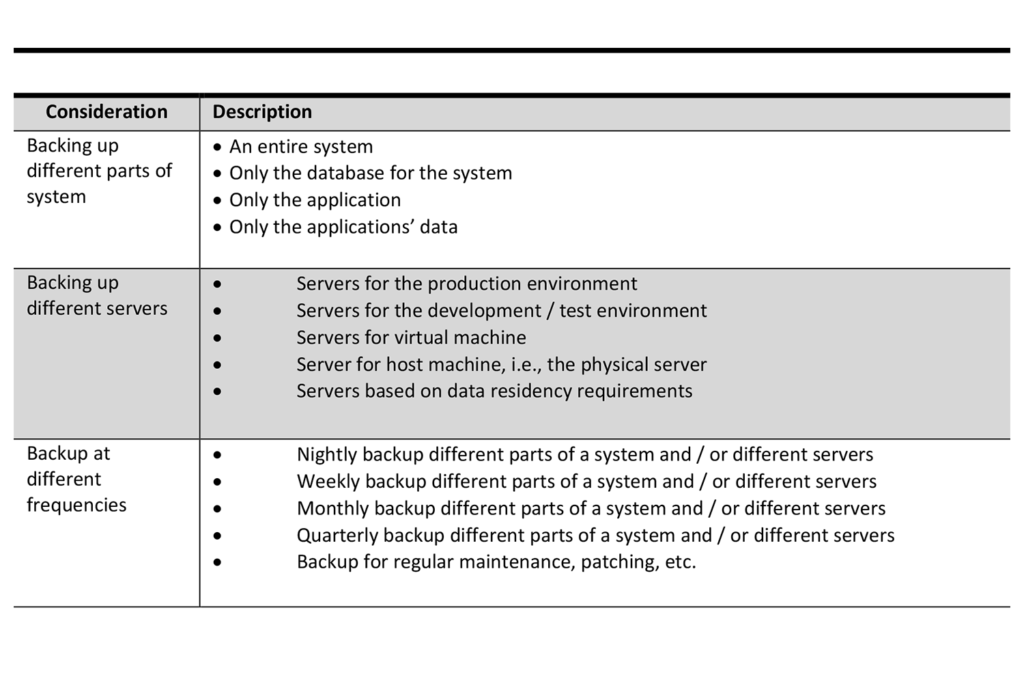

Depending on the incident, planning the response and recovery may require restoring information from backups, which is the second key input for the BC strategy and plan. The cloud service provider will back up servers in the data center as part of its normal operations but may not focus on backing up the organization’s application, data, information, and / or content for products and services. There are numerous technical considerations to backing up the information. Table 4 describes a few of them.

Change Management and User Adoption

The Greek philosopher Heraclitus once said “The only thing that is constant is change,” and this is truer today than ever. Since technological change is so rapid, digital technologies are forcing organizations to embark on DT initiatives that fundamentally change how they operate.

Organizational Change Management (OCM) is not given enough importance in technology implementations, which is unfortunate as OCM has the power to move the needle from failure to success. OCM is about initiating a “mind-shift” in the organization’s culture to accept change. Mark Fields, president of Ford, famously stated “Culture eats strategy for breakfast, lunch and dinner.” In other words, culture is everything. In the context of a DT initiative, an OCM strategy is essential for success. OCM challenges “how things are done” with respect to people, processes, policies, and technologies. The IGBOK (ARMA 2018) states “OCM … is a framework that describes … ‘changes to processes, job roles, organizational structures and type and uses of technology’” (p 120).

- Change Management for Digital Natives vs Digital Immigrants

Understanding the audience is important because change will affect business users’ roles and responsibilities differently. Common audiences are executives, senior management, middle management, administrative users, power users, business users, IT and help desk, and RIM staff. OCM will help all users to accept change, to use new processes, and to adopt new technologies. “When considering the organization’s culture and its mindset to change and adapt, it is useful to identify the organizational structure and the audiences within each structure. It is crucial to understand whether the business teams are comfortable and complacent with the status quo or are looking for ways to improve” (ARMA 2018, p 114).

One consideration of OCM is how different business users – digital natives versus digital immigrants – learn new technologies. Digital natives, for example, are those who “are all ‘native speakers’ of the digital language of computers, video games and the Internet … [while digital immigrants] … were not born into the digital world but have, at some later point in our lives, become fascinated by and adopted many or most aspects of the new technology” (Prensky 2001, p 1-2). Some research suggests that Baby Boomers, Generation X, Millennials, and Generation Z learn differently because of their ages. Other research questions whether other factors such as cultural, ethnic, and socio-economic backgrounds affect learning more. What is evident is that more recent generations are more comfortable with digital technology and use it differently.

- Status Quo vs Changing Culture

OCM has many models, and each one focuses on a different aspect of organizational change. Part 2 discussed Tuckman’s Five Stages of Group Development. While not strictly related to change management, group development of teams can be influenced by their culture to accept change and their willingness to adopt new technologies. Another model is “Status Quo vs. Change,” which examines organizational change versus technology adoption.

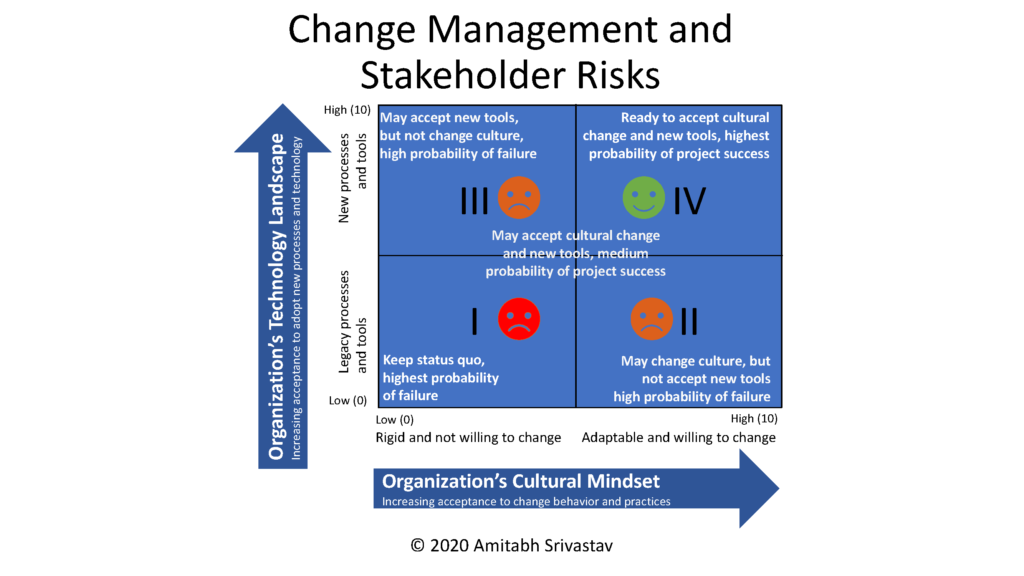

In a DT initiative, the organization must examine OCM and stakeholder risks along two dimensions – cultural “mind-shift” versus using new processes with new technology. First, if the culture is rigid, then changing the culture will be challenging. This is evident in large organizations with well-entrenched bureaucracies that continue to “do things as they were done.” Transformative change is forced onto these organizations only when they realize they must adapt to survive. On the other hand, organizations with an entrepreneurial culture are all about “doing things differently and better.” This is evident especially in start-ups because they need to continuously adapt to survive.

Second, if the culture is rigid, then organizations are prone to using legacy processes and tools. Unfortunately, the imperative to develop new processes that will use new technologies is absent. Furthermore, when organizations implement new processes and technologies, the business users may view the change as a threat, thus introducing conflict between them and management, and even with co-workers who embrace the new processes and technology. Figure 6 illustrates these risk relationships. Quadrants I, II, and III represent a high probability of failure because the business users do not want to change and do not want to use new technology.

The DT initiative will not be a straight line from Quadrant I to IV because the business users are not willing to change. Instead, the initiative will zig-zag from decreasing risk to increasing risk, and back to decreasing risk. Accordingly, the risk management response will change. For example, the DT initiative’s effort to improve content enablement might face less resistance because the IT department is eager to adopt new cloud-based tools. However, DT related to developing new processes and training to use auto-classification tools in the cloud might face resistance from the business users. So, the risk management response needs to address these two attributes in order to get the DT initiative back on track. Depending on the audiences of business users, the organization’s culture is either ready for a “mind-shift” change or obdurate to change. In OCM, “the soft stuff is always harder than the hard stuff.”

Considerations for the Future and Next Steps

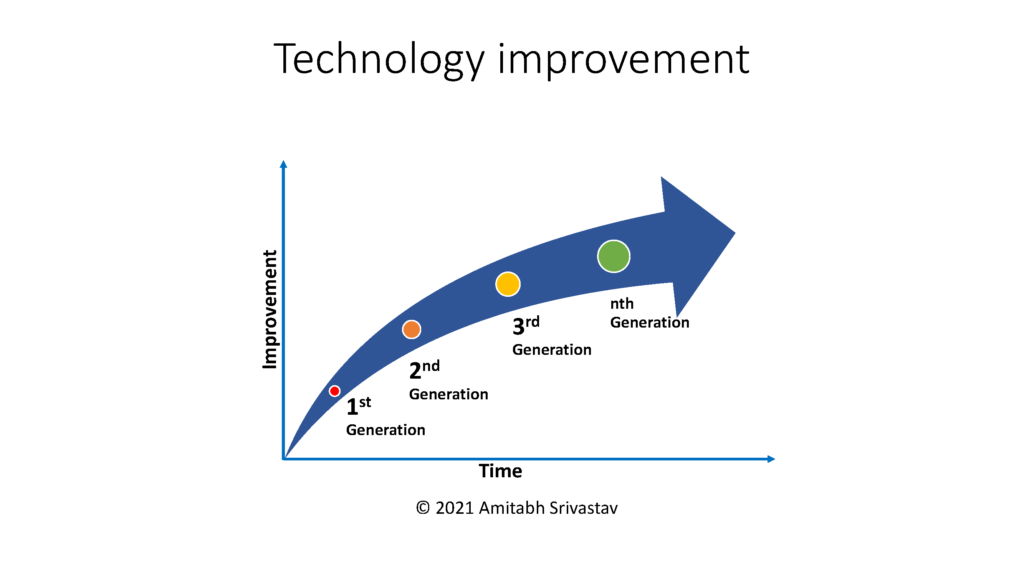

DT is as broad as one’s ability to reimagine the future. Technology is constantly changing and continuously presenting opportunities for it to be used in exciting and innovative ways to deliver new products and services. So, predicting which technologies will become mainstream is a challenge. As with any new DT technology, there is hype as it matures and eventually becomes mainstream through its iterations. See Figure 7. Thus, organizations need to continuously embrace change.

What is certain is that technology is a change disruptor. Therefore, DT is not a single project, but a continuous flow of innovative solutions for business users who will use new technologies with new processes and new policies, thereby creating new business models to deliver new products and services to new markets anytime, anywhere, on any device. Going forward, organizations need:

- To have a sense of urgency to start their DT journey

- To be clear about the strategic vision of the DT journey when developing the business case

- To allocate significant time and effort for change management and awareness activities

- To identify change champions who can help change the culture and remove barriers to technology adoption

- To celebrate wins and communicate successes often, no matter how small, in order to sustain momentum during the long journey

Key Takeaways

The key takeaway from these articles is that DT is complex, broad, and diverse, but its benefits are significant. While it is true that one benefit of DT is the digitalization of traditional RIM practices, many other benefits are about managing digital content throughout its lifecycle and delivering the content in the form of new products and services to new markets and new customers. Both customers and employees expect products and services to be available on-demand 24/7, which is only possible via a DT initiative. This means transforming operations so that they are agile, can quickly adapt to market and regulatory changes, and can respond to customer demands. The volume of digital content is exploding and creating information silos. So, another benefit of DT is managing content sprawl while reducing organizational risks. This means organizations must know what content they are storing, and why, and what content should be disposed of. Keeping content beyond its intended business purpose exposes the organization to a plethora of risks, from increasing storage costs, data breaches, and ransomware attacks to access-to-information risks, privacy violations, litigation, and loss of reputation. The benefit of DT tools for ROT analysis, auto-classification, and auto-routing using AI and RPA is that they can automate content management at scale, which is not humanly possible via manual effort. This can help improve IG over content sprawl, reduce information silos, increase collaboration, promote knowledge sharing, and enable search across content repositories. At the same time, DT can use AI and RPA processes at scale to apply retention schedules to content deemed a record and execute disposition actions on those records. This is a traditional RIM activity, but it is now more important in the new reality of DT.

Conclusion

Organizations embarking on their DT journey, or those already on their journey, must be agile in a technology- and data-driven world as they prepare for the “Internet of Everything.” Therefore, organizations need to adopt a culture of constantly changing and re-imagining their future. This requires vision to develop DT strategies for new business models that deliver increasing business value.

References:

- AICPA/CICA. (2011). AICPA/CICA Privacy Maturity Model, American Institute of Certified Public Accountants, Inc. and the Canadian Institute of Chartered Accountants. https://iapp.org/media/pdf/resource_center/aicpa_cica_privacy_maturity_model_final-2011.pdf. Retrieved June 2021.

- ARMA. (2018). Information Governance Body of Knowledge (iGBoK), 1st ed., ARMA International.

- BCI. (2017). BCI Glossary of Business Continuity Terms, Business Continuity Institute. https://www.thebci.org/knowledge/business-continuity-glossary.html. Retrieved June 2021.

- Cavoukian, Ann, Ph.D. (2011). Privacy by Design: The 7 Foundational Principles Implementation and Mapping of Fair Information Practices. Information & Privacy Commission, Ontario, Canada. https://www.ipc.on.ca/wp-content/uploads/resources/pbd-implement-7found-principles.pdf. Retrieved June 2021.

- Fink, Sheri. (2021). Experts Call for Sweeping Reforms to Prevent the Next Pandemic, The New York Times. https://www.nytimes.com/2021/05/12/us/covid-pandemic.html. Retrieved May 2021.

- Frank, John. (2016). Our search warrant case: Microsoft’s commitment to protecting your privacy, EU Policy Blog, Microsoft. https://blogs.microsoft.com/eupolicy/2016/09/05/our-search-warrant-case-microsofts-commitment-to-protecting-your-privacy/. Retrieved June 2021.

- Gartner. (2021). Gartner Glossary, https://www.gartner.com/en/information-technology/glossary. Retrieved March 2021.

- Hayes, D., Cappa, F., and Le-Khac, N. A. (2020). An effective approach to mobile device management: Security and privacy issues associated with mobile applications, Digital Business, Vol. 1, Issue 1, September 2020, 100001. https://doi.org/10.1016/j.digbus.2020.100001. Retrieved June 2021.

- ICA. (2008). Principles and Functional Requirements for Records in Electronic Office Environments – Module 2: Guidelines and Functional Requirements for Electronic Records Management Systems, International Council on Archives (ICA). https://www.naa.gov.au/sites/default/files/2019-09/Module%202%20-%20%20ICA-Guidelines-principles%20and%20Functional%20Requirements_tcm16-95419.pdf. Retrieved June 2021.

- Khan, M. Ali-ud-din, Muhammad Fahim Uddin, Navarun Gupta. (2014). Seven V’s of Big Data: Understanding Big Data to extract Value. Proceedings of 2014 Zone 1 Conference of the American Society for Engineering Education (ASEE Zone 1).

- Knight, F.H. (1921). Risk, Uncertainty, and Profit. Cambridge, MA: Hart, Schaffner & Marx; Houghton Mifflin Company.

- Latson, Jennifer. (2014). “Murder in Munich”: A Terrorist Threat Ignored, TIME USA. https://time.com/3223225/munich-anniversary/. Retrieved May 2021.

- Midler, Marisa. (2020). Ransomware as a Service (RaaS) Threats, Software Engineering Institute, Carnegie Mellon University. https://insights.sei.cmu.edu/blog/ransomware-as-a-service-raas-threats/. Retrieved May 2021.

- Midler, Marisa, O’Meara, Kyle, & Parisi, Alexandra. (2020). Current Ransomware Threats, Software Engineering Institute, Carnegie Mellon University. https://resources.sei.cmu.edu/library/asset-view.cfm?assetid=645032. Retrieved May 2021.

- PMI. (2009). Practice Standard for Project Risk Management, Project Management Institute, 2009, p 100.

- Prensky, Marc. (2001). Digital Natives, Digital Immigrants, On the Horizon, MCB University Press, Vol. 9 No. 5, October 2001. http://marcprensky.com/writing/Prensky%20-%20Digital%20Natives,%20Digital%20Immigrants%20-%20Part1.pdf. Retrieved September 2017.

- Shegda, Karen M., Hobert, Karen A., Woodbridge, Michael, and Basso, Monica. (2016). Reinventing ECM: Introducing Content Services Platforms and Applications, Gartner. https://www.gartner.com/en/documents/3534821/reinventing-ecm-introducing-content-services-platforms-a. Retrieved June 2021.

- Srivastav, Amitabh. (2014). Risk Management and Virtual Teams: An Exploratory Empirical Investigation of Relationships between Perceived Trust and the Perceived Risk of Using Virtual Project Teams. Thesis in Project Management, Université du Québec en Outaouais, 2014. Abstract available at http://di.uqo.ca/id/eprint/719.

- TBS. (2016). Government of Canada Information Technology Strategic Plan 2016-2020, Treasury Board Secretariat, Government of Canada. https://www.canada.ca/en/treasury-board-secretariat/services/information-technology/information-technology-strategy/strategic-plan-2016-2020.html. Retrieved June 2021.

- Woodbridge, Michael. (2017). The Death of ECM and Birth of Content Services, Gartner. https://blogs.gartner.com/michael-woodbridge/the-death-of-ecm-and-birth-of-content-services/. Retrieved June 2021.

[1] The acronym MDM can also refer to Master Data Management, but that is not the context in this case.

[2] Some use the term business impact assessment, but the analysis is the assessment of the activities and their impact to disrupt the organization’s business.